AI Act: less than 100 days left — what European companies need to do

Jorge García

Tecnea

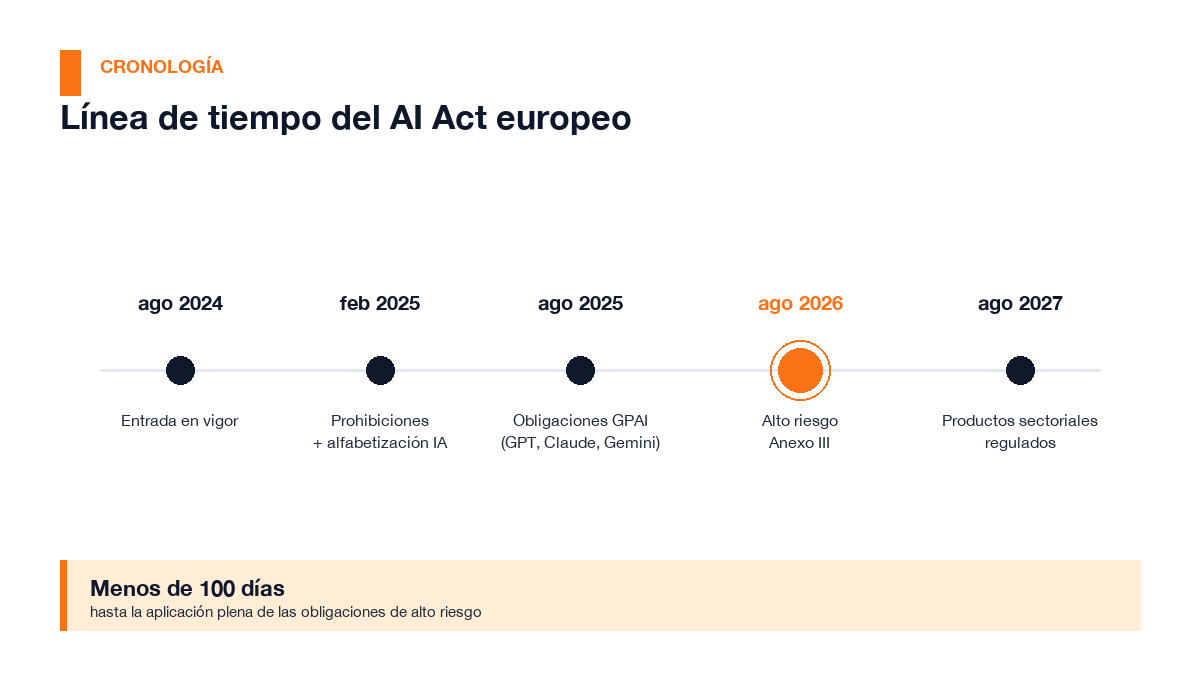

Less than one hundred days remain until 2 August 2026. That date marks the full application of the obligations of the European AI Act for most artificial intelligence systems deployed in companies. It is not a symbolic date: on that day the national supervisory authorities designated across the EU will have full powers of inspection and sanction, and the European Commission will activate its ability to fine the providers of large AI models.

The question technology and operations leaders in any mid-sized European company should be asking themselves right now is not whether the AI Act affects them. The question is: how many high-risk AI systems do you have deployed without realising it?

The answer, in many cases, will surprise you.

The full timeline: what already applies and what arrives in August

The AI Act's obligations have been taking effect in stages since August 2024. The critical date for most companies is 2 August 2026.

The AI Act did not arrive all at once. Its obligations have been taking effect in stages for almost two years, and most of the critical ones are concentrated precisely now:

- August 2024 — The Regulation officially enters into force.

- February 2025 — Absolute prohibitions (Art. 5): subliminal manipulation, exploitation of vulnerabilities, mass social scoring. Obligation of AI literacy (Art. 4) for all personnel working with AI systems.

- August 2025 — Obligations for general-purpose AI models (GPAI): GPT, Claude, Gemini, Llama. Governance rules and technical documentation.

- 2 August 2026 — Full obligations for high-risk systems (Annex III). Commission powers to sanction GPAI. Transparency rules.

- August 2027 — High-risk systems embedded in products already regulated by sectoral legislation (machinery, medical devices…).

There is a nuance few articles mention: some stand-alone Annex III systems actually have until December 2027. But that is no excuse to wait: system classification is mandatory for everyone now, and technical documentation and risk management processes take months of work.
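As a rough illustration of the staged timeline above, the following sketch stores the milestones as dates and counts the days left until the high-risk deadline. The article gives only month and year for most milestones, so the exact days used here are an assumption to check against the Regulation, and the names `MILESTONES`, `days_remaining` and `already_applicable` are purely illustrative.

```python
from datetime import date

# Milestones from the list above. Days of the month other than 2 August 2026
# are an assumption of this sketch and should be checked against the Regulation.
MILESTONES = [
    (date(2024, 8, 1), "Regulation enters into force"),
    (date(2025, 2, 2), "Prohibitions (Art. 5) and AI literacy (Art. 4)"),
    (date(2025, 8, 2), "Obligations for general-purpose AI models"),
    (date(2026, 8, 2), "High-risk obligations (Annex III)"),
    (date(2027, 8, 2), "High-risk systems embedded in regulated products"),
]
HIGH_RISK_DEADLINE = date(2026, 8, 2)


def days_remaining(today: date, deadline: date = HIGH_RISK_DEADLINE) -> int:
    """Whole days from `today` to `deadline`; negative once it has passed."""
    return (deadline - today).days


def already_applicable(today: date) -> list[str]:
    """Labels of the milestones whose date is on or before `today`."""
    return [label for when, label in MILESTONES if when <= today]


if __name__ == "__main__":
    today = date.today()
    print(f"Days until 2 August 2026: {days_remaining(today)}")
    for label in already_applicable(today):
        print("Already applies:", label)
```

Counting from 24 April 2026, for example, `days_remaining` returns exactly 100, which is where the "one hundred days" framing comes from.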

Do you have high-risk systems without knowing it?

The 8 Annex III categories. The most frequent in mid-sized European companies are Employment/HR and Essential Services (credit, insurance).

Annex III of the AI Act defines eight categories of systems considered high-risk. You don't need cutting-edge technology to fall into one of them. These are the most frequent in mid-sized European companies:

Employment and human resources

This is probably the category most companies overlook. AI systems that fall under high-risk include those that:

- Automatically filter or score job applications

- Evaluate candidates during selection processes

- Make or assist decisions on promotion, reassignment or dismissal

- Monitor and evaluate employee performance

If your company uses an ATS (applicant tracking system) with automatic scoring, or a People Analytics tool that classifies employees, you probably have a high-risk system.

Essential services: credit and insurance

Also high-risk are systems that:

- Evaluate the creditworthiness or establish the credit scoring of natural persons

- Determine pricing or risk in life and health insurance

This directly affects financial institutions, insurers and any company using AI to decide differentiated commercial conditions per customer.

Other relevant categories

- Biometrics — facial recognition for access control, identification of persons

- Education — systems that evaluate students, decide admissions or automatically grade exams

- Critical infrastructure — AI in managing energy grids, water or transport

- Administration of justice and law enforcement — mainly applies to public administrations

The European Commission has published a self-assessment tool, the AI Act Compliance Checker, so any company can assess whether its systems fall under Annex III. It is the natural first step, and takes no more than one hour per system.
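Before running a formal assessment, a first pass over the internal inventory can be sketched in a few lines. The example below is only a triage aid under stated assumptions: the function tags in `ANNEX_III_HINTS` are hypothetical labels invented here to mirror the examples in this article, they do not come from the Regulation or from the Compliance Checker, and a match means "look closer", not "this is legally high-risk".

```python
from dataclasses import dataclass, field

# Hypothetical function tags mapped to the Annex III areas discussed above.
ANNEX_III_HINTS = {
    "cv_screening": "Employment and human resources",
    "candidate_evaluation": "Employment and human resources",
    "promotion_or_dismissal": "Employment and human resources",
    "performance_monitoring": "Employment and human resources",
    "credit_scoring": "Essential services",
    "life_health_insurance_pricing": "Essential services",
    "biometric_identification": "Biometrics",
    "student_assessment": "Education",
    "critical_infrastructure_management": "Critical infrastructure",
}


@dataclass
class AISystem:
    name: str
    functions: set[str] = field(default_factory=set)


def screen(system: AISystem) -> list[str]:
    """Return the Annex III areas a system may touch, sorted, without repeats."""
    return sorted(
        {ANNEX_III_HINTS[f] for f in system.functions if f in ANNEX_III_HINTS}
    )


inventory = [
    AISystem("ATS with automatic scoring", {"cv_screening"}),
    AISystem("Internal document summariser", {"text_summarisation"}),
]

for system in inventory:
    areas = screen(system)
    print(system.name, "->", areas or "no Annex III hint, still record the assessment")
```

The screening looks at what a system does rather than how it is built, so the same inventory entry works whether the function is performed by a classic model, a vendor tool or an agent.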

The Q2 2026 update: AI agents are also covered

In April 2026, the European Commission formally clarified that AI agents do not constitute a separate legal category. The existing definitions in the Regulation, "AI system" and "general-purpose AI model", are sufficient to cover them.

The practical consequence is clear: if you have deployed an agent based on Copilot, OpenAI Agents, CrewAI or any other orchestrator, and that agent performs functions that fall under Annex III (for example, filtering candidates, evaluating creditworthiness or monitoring employees), exactly the same obligations apply as to any other high-risk system.

This clarification comes at a time when many companies are deploying AI agents rapidly, often without having carried out the prior risk assessment the Regulation requires.

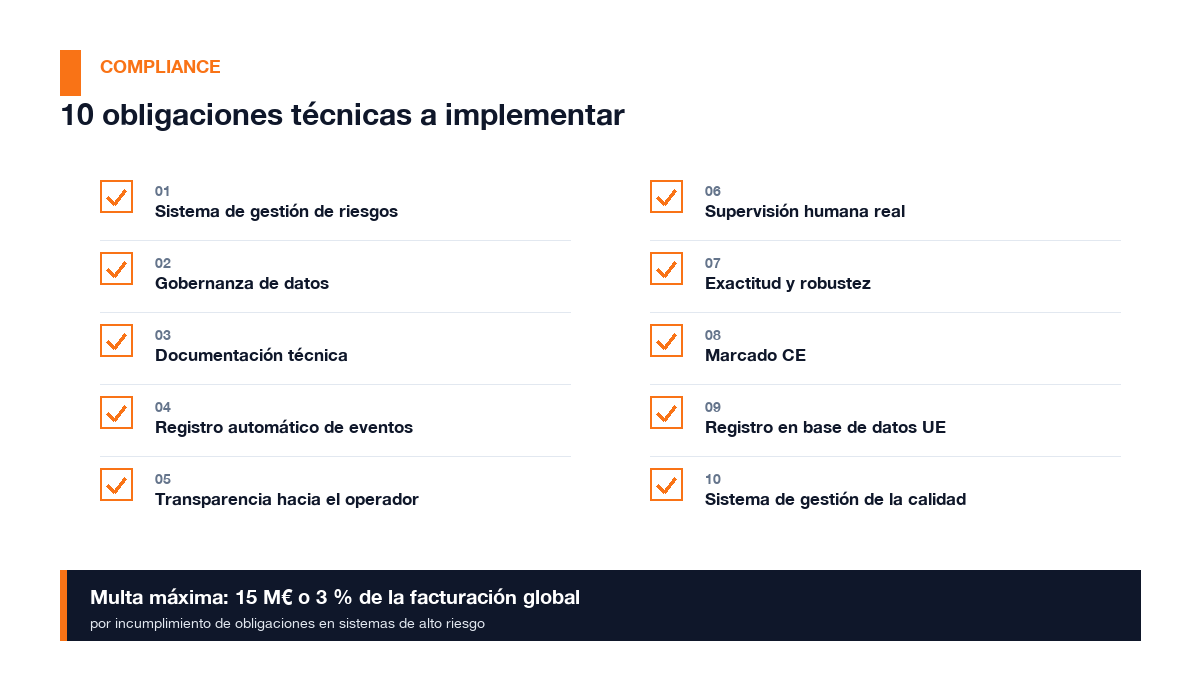

The 10-obligation checklist for high-risk systems

Each of these ten points requires prior work. None of them can be implemented in a week.

If one or several of your systems fall under Annex III, these are the obligations you must have implemented before 2 August:

- Risk management system — continuous, documented process, not a one-off analysis

- Data governance — quality, representativeness and origin of training data

- Technical documentation — prepared before deployment: what the system does, what data it uses, how it was evaluated

- Automatic event logging — logs that allow reconstruction of the system's decisions

- Transparency to the operator — instructions for use, known limitations, required level of supervision

- Real human oversight — effective intervention mechanisms, not merely formal

- Accuracy and robustness — documented and monitored accuracy metrics

- CE marking — mandatory for systems placed on the European market

- Registration in the EU database — required for most Annex III systems

- Quality management system — internal process that ensures continuous compliance
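To make the event-logging and human-oversight points in the checklist above more concrete, here is a minimal sketch of a decision record written as one JSON line per decision. Every field name is hypothetical: the checklist only asks for logs that allow decisions to be reconstructed, and what a given system must actually record depends on its technical documentation. The sketch stores a hash of the inputs rather than the inputs themselves, which assumes the original inputs are retained elsewhere.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_decision_record(system_id, model_version, inputs, output, reviewer=None):
    """Build one log record that lets a decision be traced later.

    Field names are illustrative, not prescribed by the Regulation.
    """
    # Canonical JSON (sorted keys) so the same inputs always hash the same way.
    payload = json.dumps(inputs, sort_keys=True, ensure_ascii=False)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,
        "model_version": model_version,
        # The hash identifies which stored input produced this decision
        # without copying personal data into the log itself.
        "input_sha256": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "output": output,
        "human_reviewer": reviewer,  # None means no human has reviewed it yet
    }


def append_record(path, record):
    """Append the record to an append-only JSON Lines file."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, ensure_ascii=False) + "\n")


record = make_decision_record(
    system_id="ats-scoring",  # hypothetical system name
    model_version="2026.03",
    inputs={"candidate_id": "c-123", "years_experience": 4},
    output={"score": 0.71, "shortlisted": True},
)
print(json.dumps(record, indent=2))
```

Recording the model version and the reviewer alongside each output is a design choice of this sketch: it ties the logging obligation to the human-oversight one, so an inspection can see both what the system decided and whether a person intervened.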

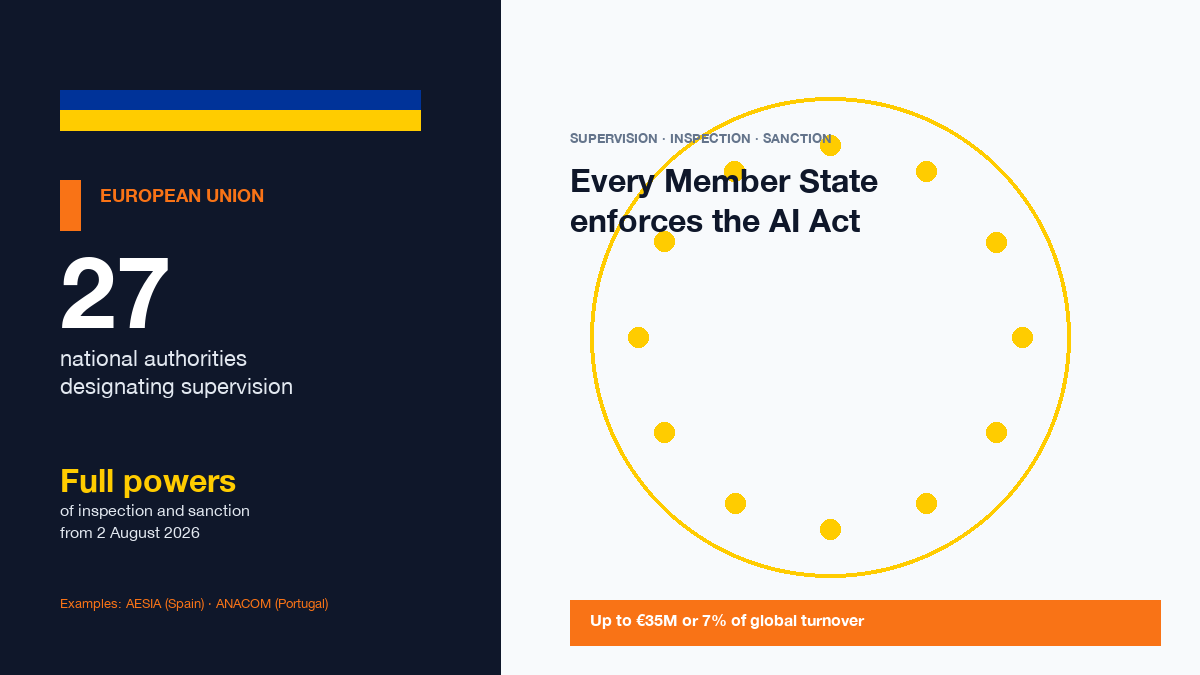

National supervisory authorities: already in place

Each EU Member State is designating a national competent authority to supervise and sanction AI Act compliance.

Every EU Member State is required to designate one or more competent national authorities to supervise compliance with the AI Act. Spain was the first to do so, creating a dedicated agency (AESIA) in June 2024. Portugal designated ANACOM as principal authority in September 2025, coordinating fourteen sectoral regulators. Other Member States have designated data protection authorities, digital agencies or newly created bodies depending on their administrative structure.

From 2 August 2026, these authorities will have full powers of inspection, investigation and sanction. The fines contemplated in the Regulation are:

- Up to €35 million or 7 % of global turnover (whichever is greater) for prohibited uses

- Up to €15 million or 3 % of global turnover for non-compliance with high-risk system obligations

- Up to €7.5 million or 1 % for incorrect information provided to authorities

For a company with €50 million turnover, 3 % is €1.5 million. This is not a theoretical risk.
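The arithmetic above can be made explicit. The sketch below computes the ceiling of a fine tier, not the fine an authority would actually impose. It assumes "whichever is greater" applies to every tier (the list states it only for prohibited uses). The `sme` switch, which takes the lower of the two figures instead, reflects a reading of Art. 99(6) that should be verified against the official text. Function and constant names are illustrative.

```python
# Fine tiers from the list above: (fixed cap in euros, % of global turnover).
PROHIBITED_USE = (35_000_000, 7.0)
HIGH_RISK_NON_COMPLIANCE = (15_000_000, 3.0)


def fine_ceiling(turnover_eur: float, fixed_cap_eur: float, pct: float,
                 sme: bool = False) -> float:
    """Maximum fine for one tier, in euros.

    By default the ceiling is the greater of the fixed cap and the turnover
    percentage. With sme=True it is the lower of the two (assumption: this
    mirrors Art. 99(6); check the Regulation before relying on it).
    """
    pct_amount = turnover_eur * pct / 100
    pick = min if sme else max
    return float(pick(fixed_cap_eur, pct_amount))


turnover = 50_000_000  # the example company from the text
print(fine_ceiling(turnover, *HIGH_RISK_NON_COMPLIANCE, sme=True))
print(fine_ceiling(turnover, *HIGH_RISK_NON_COMPLIANCE))
```

For the €50 million company in the text, the first call gives €1.5 million and the second €15 million, which shows how much the exposure depends on which rule applies to the company.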

Our view: the window is now, not in July

At Tecnea we have long seen regulation move much more slowly than business adoption of technology. The AI Act is, in this sense, the reverse case: the regulation is advancing faster than many companies believe.

The good news is that most mid-sized companies do not have dozens of high-risk systems. Frequently, the initial assessment reveals that one or two systems need attention, and that the rest fall outside Annex III.

The mistake we are seeing repeated is waiting for someone from outside — a supplier, a last-minute consultant, a press article — to trigger the urgency. By then, the deadlines for properly preparing technical documentation and risk management processes will have been compressed too much.

The first step, classifying systems, does not require major resources. It requires time and judgement. Those who do it now will reach 2 August with margin to spare. Those who wait until July probably will not.

Frequently asked questions

Does the AI Act affect SMEs? Yes, although with nuances. The obligations apply to any company that develops or deploys AI systems in the EU, regardless of size. There are some procedural simplifications for micro-enterprises, but the substantive obligations — classify, document and manage risks — are the same. Size does not exempt; it can justify lighter processes if the system has lower impact.

Does using ChatGPT or Copilot in my company make me a high-risk provider? Not directly. When you use a general-purpose AI model as an internal tool (drafting documents, summarising information, assisting with generic tasks), you are normally a "deployer" under the Regulation (the company using the system, as opposed to its provider), with lighter obligations. The problem arises when you integrate that model into a decision process affecting people: hiring, credit, performance evaluation. That is when the classification changes.

What exactly is the "AI literacy" obligation in Article 4? Since February 2025, all organisations that use or develop AI systems must ensure their personnel have a sufficient level of understanding of how these systems work, their capabilities, limitations and risks. There is no mandatory format: it can be internal training, workshops or documentation. But it must be recorded.

Are systems bought from tech suppliers the supplier's responsibility or mine? Responsibility is shared. The supplier (manufacturer or distributor of the system) has its own compliance obligations. But as the company deploying the system, you have additional obligations as deployer: ensuring the system is used in accordance with its instructions, implementing human oversight and reporting serious incidents. It is not enough for the supplier to say it complies with the AI Act.

What happens if on 2 August I don't have everything in order? It depends on the specific situation. The European Commission and the national supervisory authorities have the power to open investigations, require information, order corrective measures and, ultimately, impose fines. In practice, the first supervisory actions will focus on the most serious or most visible cases. But that does not mean the risk is theoretical: having the documentation and processes in place is the only defensible position before an inspection.

Sources

- Annex III of the AI Act — artificialintelligenceact.eu

- EU AI Act Compliance Calendar — Delbion

- EU AI Act 2026 Updates — Legalnodes

- AI Act — Shaping Europe's digital future, European Commission

- Guidelines for providers of general-purpose AI models

- High-risk AI systems under the EU AI Act — DPO Consulting
